Outages will eventually affect every public cloud service, no matter what precautions you take. Rather than investing in an on-site disaster recovery system that aims to eliminate every single point of failure, you can design fail-over systems with low fixed costs. With such a design, the application does not have to become inaccessible when a data center or Availability Zone (AZ) in AWS fails. In a traditional IT set-up, you would replicate the important tiers to make the data center resilient. That is a very costly solution and, worse, it still does not guarantee resiliency. There are many smaller steps that businesses can take to make the whole system resilient; below are a few of the key strategies:

A good starting point is a loosely coupled system. Separate the components so that none of them needs to know exactly how the others work. Keeping components separate removes the internal dependencies between them, and a loosely coupled system also scales better. When one component fails, the others are not affected by it. The end result is a set-up that is far more resilient to failures of individual components.

• To do this, you can use vanilla templates and apply configuration at deployment time through configuration management. This also gives you better control over the instances and lets you roll out security updates when required: you simply change the code in the Puppet manifest instead of patching every instance manually. New instances then no longer depend on the template, which reduces the risk of system failure and allows instances to be deployed faster.

• If you use queues to connect components, the system can better absorb the spillover when the workload spikes. By placing SQS between layers, the instances in each layer can be scaled independently according to the length of the queue.

• You should try to make applications stateless. Developers have used many methods for storing user session data, and keeping that data in the database makes it hard for applications to scale seamlessly. When you do have to store state, save it on the client. This cuts down on load and removes the dependency on the server.

• You should also distribute instances across multiple AZs and use Elastic Load Balancers (ELBs) to split the traffic across the healthy instances, with health-check criteria that you control.

• The best method for static data is to store it on S3 rather than serve it from EC2 nodes. This takes load off the EC2 nodes, which lowers the chance of them failing, and it also cuts costs because you can run leaner EC2 instance types.
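The queue-based scaling point in the list above can be sketched as a small sizing rule. This is a minimal illustration rather than an AWS API: the function name `desired_workers` and the parameters `msgs_per_worker`, `min_workers` and `max_workers` are assumptions made for this example. In a real deployment the backlog figure would typically come from the SQS queue attribute `ApproximateNumberOfMessages`, and the result would be fed to whatever mechanism sets the size of the worker group.

```python
import math


def desired_workers(queue_length, msgs_per_worker=100, min_workers=1, max_workers=20):
    """Return how many worker instances to run for a given queue backlog.

    All parameter names and defaults are hypothetical values for this sketch.
    """
    if queue_length < 0:
        raise ValueError("queue_length must be non-negative")
    # One worker per `msgs_per_worker` queued messages, rounded up.
    needed = math.ceil(queue_length / msgs_per_worker)
    # Clamp to the allowed range so the layer never scales to zero
    # and never grows beyond the configured ceiling.
    return max(min_workers, min(max_workers, needed))


if __name__ == "__main__":
    for backlog in (0, 250, 5000):
        print(backlog, "messages ->", desired_workers(backlog), "workers")
```

Because the producing layer only writes to the queue and the consuming layer only reads from it, each side can be resized by a rule like this without the other side knowing.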

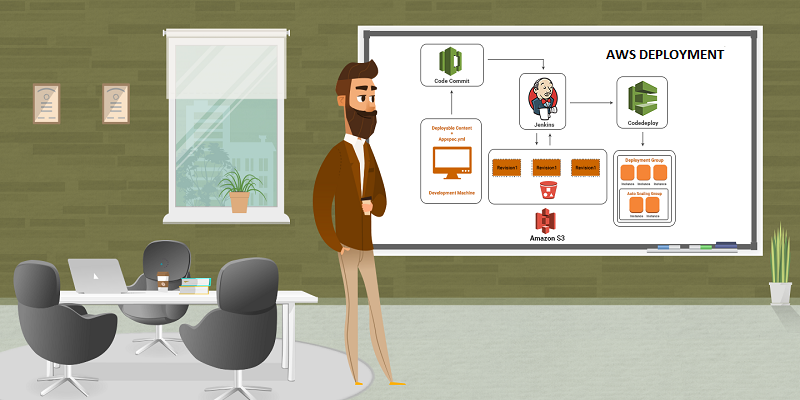
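For the stateless-application point in the list above, one possible way to keep state on the client is to hand it a signed token that any server instance can verify without a session store. The sketch below is an assumption about how this might be done, not a method described in this article: `SECRET_KEY`, `encode_state` and `decode_state` are hypothetical names, and the signature (HMAC-SHA256) only detects tampering; it does not hide the contents from the client.

```python
import base64
import hashlib
import hmac
import json

# Hypothetical shared secret; every server instance would need the same key.
SECRET_KEY = b"replace-with-a-real-secret"


def encode_state(state, key=SECRET_KEY):
    """Serialize `state` and append an HMAC so the client cannot alter it unnoticed."""
    raw = json.dumps(state, sort_keys=True).encode("utf-8")
    payload = base64.urlsafe_b64encode(raw).decode("ascii")
    signature = hmac.new(key, payload.encode("ascii"), hashlib.sha256).hexdigest()
    return payload + "." + signature


def decode_state(token, key=SECRET_KEY):
    """Return the stored state, or None if the token is malformed or tampered with."""
    try:
        payload, signature = token.rsplit(".", 1)
    except ValueError:
        return None  # no separator, so this is not one of our tokens
    expected = hmac.new(key, payload.encode("utf-8"), hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    if not hmac.compare_digest(signature.encode("utf-8"), expected.encode("utf-8")):
        return None
    return json.loads(base64.urlsafe_b64decode(payload.encode("ascii")))


if __name__ == "__main__":
    token = encode_state({"user": "alice", "cart": [1, 2]})
    print(decode_state(token))
    print(decode_state("x" + token))
```

Since the token carries everything needed to verify it, a request can land on any instance behind the load balancer and be handled the same way.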

Another effective way to make an AWS deployment stronger is to automate the infrastructure, because wherever humans are involved there is room for error. You need to deploy an auto-scaling infrastructure that is self-healing by nature: it dynamically builds and destroys instances and assigns the right resources and roles to them. All of this requires a large upfront investment, but if you automate the infrastructure from the start you cut down on the costs of installation and maintenance later on.
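The self-healing behaviour described here boils down to a reconcile loop: compare what is running with what should be running, then destroy and build instances to close the gap. The following is a minimal in-memory sketch of that idea, assuming a hypothetical `Instance` record and `launch` helper; it does not call any AWS service, where an Auto Scaling group would normally do this work for you.

```python
from dataclasses import dataclass
from itertools import count


@dataclass
class Instance:
    instance_id: str
    role: str
    healthy: bool = True


_next_id = count(1)


def launch(role):
    """Stand-in for starting a new instance with the given role (hypothetical helper)."""
    return Instance(instance_id=f"i-{next(_next_id):04d}", role=role)


def reconcile(fleet, desired):
    """Return a fleet that matches `desired`, a mapping of role -> instance count.

    Unhealthy instances are dropped, surplus instances are removed,
    and missing capacity is replaced with freshly launched instances.
    """
    survivors = [inst for inst in fleet if inst.healthy]
    result = []
    for role, want in desired.items():
        current = [inst for inst in survivors if inst.role == role][:want]
        while len(current) < want:
            current.append(launch(role))
        result.extend(current)
    return result


if __name__ == "__main__":
    fleet = [
        Instance("a", "web"),
        Instance("b", "web", healthy=False),
        Instance("c", "worker"),
    ]
    for inst in reconcile(fleet, {"web": 2, "worker": 1}):
        print(inst)
```

Running the same function repeatedly on its own output changes nothing once the fleet matches the target, which is what makes this kind of loop safe to automate.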

A third way to make AWS deployments more resilient is to build in mechanisms from the start that keep the system safe regardless of what happens. This works from the assumption that things are likely to go wrong, so engineers have to anticipate what can go wrong and then correct those deficiencies. For instance, Netflix has built a whole team of engineers who focus entirely on controlled failure injection. To build a fail-proof environment, you have to keep applying best practices and then monitor and update them continuously.

• Performance testing is one way of correcting such deficiencies. It is often overlooked but is crucial for any application. Put the database under stress tests right from the design phase, and from multiple locations, to see how the system will behave in the real world.

• You should also use the Simian Army (used by Netflix), which comprises many open-source testing tools, to see whether your system is resilient enough to withstand an attack. Using these tools, engineers can test the security, resiliency, reliability and recoverability of cloud services.
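To make the idea of controlled failure injection concrete, here is a toy experiment in the spirit of randomly terminating instances, run entirely against a simulated fleet. It does not use the Simian Army tools or any AWS API; `serve`, `inject_failures` and `availability` are hypothetical names chosen for this sketch, and the fleet is just a list of `(name, healthy)` pairs.

```python
import random


def serve(request_id, instances):
    """Route a request to the first healthy instance, starting round-robin.

    Returns the instance name, or None if nothing healthy is left.
    """
    total = len(instances)
    for offset in range(total):
        name, healthy = instances[(request_id + offset) % total]
        if healthy:
            return name
    return None


def inject_failures(instances, kill_count, rng):
    """Return a copy of the fleet with `kill_count` randomly chosen instances marked down."""
    victims = set(rng.sample(range(len(instances)), kill_count))
    return [
        (name, healthy and index not in victims)
        for index, (name, healthy) in enumerate(instances)
    ]


def availability(instances, requests=100):
    """Fraction of simulated requests that reached a healthy instance."""
    served = sum(1 for r in range(requests) if serve(r, instances) is not None)
    return served / requests


if __name__ == "__main__":
    fleet = [("web-1", True), ("web-2", True), ("web-3", True), ("web-4", True)]
    rng = random.Random(42)  # fixed seed so the experiment is repeatable
    degraded = inject_failures(fleet, kill_count=2, rng=rng)
    print("after losing 2 of 4:", availability(degraded))
    print("after losing 4 of 4:", availability(inject_failures(fleet, 4, rng)))
```

The useful part of such an experiment is the assertion you attach to it: here, losing half of the fleet should leave availability unchanged, and a drop would point to a hidden dependency.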

The truth is that a robust and resilient infrastructure does not come about just by following a few to-do steps. It needs continuous monitoring of many processes, and a continuous focus on optimizing the system for automatic failover using both native and third-party tools.
